Yaru Liu

Hi there! I’m a third-year PhD student in the Department of Computer Science and Technology at the University of Cambridge, advised by Prof. Rafal Mantiuk. My PhD research lies at the intersection of computer graphics and machine learning. My research background includes content-adaptive rendering and real-time 3D reconstruction.

Previously, I obtained my B.Sc. in pure mathematics at the University of Toronto and my M.Sc. in computer engineering at McGill University, where I was fortunate to be supervised by Prof. Derek Nowrouzezahrai and Prof. Morgan McGuire.

Beyond research, I love business and fashion. I’ve founded three startups, one of which reached an exit, and I’m especially drawn to problems with high technical barriers. I am driven by practical questions and sometimes use business to anchor my academic pursuits. Fashion, meanwhile, serves as my creative therapy. To me, these three don’t just coexist; they complement and elevate one another.

Feel free to reach out for collaboration🩵

News

- [Apr, 2026] Our paper, “Streaming of Rendered Content with Adaptive Frame Rate and Resolution,” has been accepted to SIGGRAPH 2026.

- [Jan, 2026] Started my internship at the NVIDIA Real-Time Graphics Group.

- [Jun, 2025] Honored to be selected as a finalist for the Qualcomm Innovation Fellowship Europe 2025.

- [May, 2025] Invited talk at the University of Cambridge: “Streaming of Rendered Content with Adaptive Frame Rate and Resolution.”

- [Mar, 2025] Grateful to receive a 2025 award from the Rabin Ezra Scholarship Trust as one of three UK researchers recognized for excellence in computer graphics and visual computing.

Projects


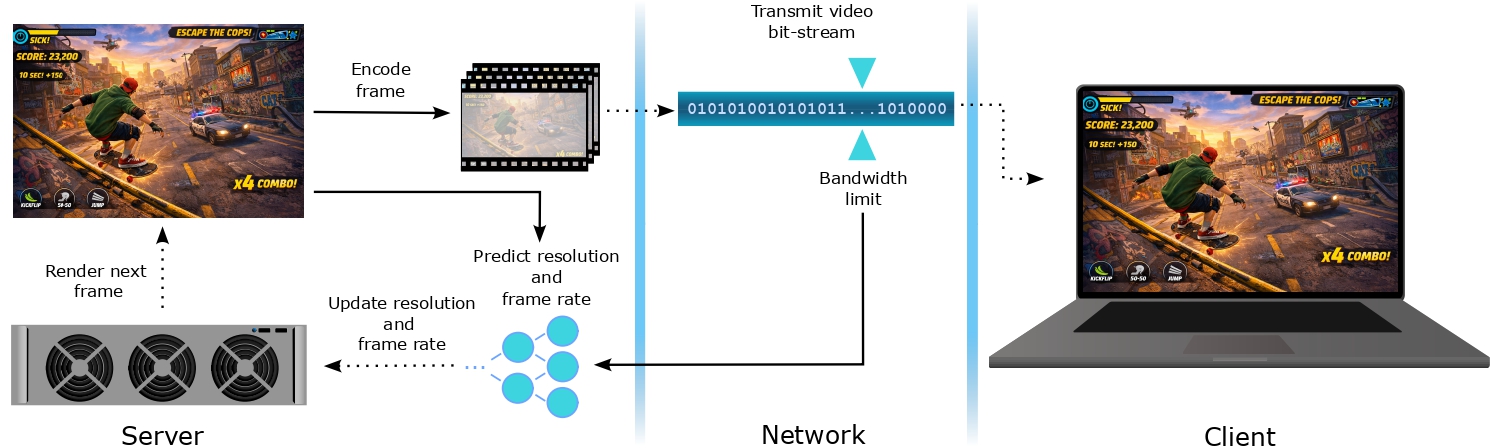

Streaming of Rendered Content with Adaptive Frame Rate and Resolution

Yaru Liu, Joseph March, Rafal Mantiuk

Accepted to SIGGRAPH 2026 [Paper]

We exploit the spatio-temporal limits of the human visual system to adaptively adjust frame rate and resolution based on scene content and motion. A lightweight neural network predicts the configuration that maximizes perceptual quality while minimizing rendering load under bandwidth constraints. Our approach preserves perceived quality while reducing computational cost by 50% or more.


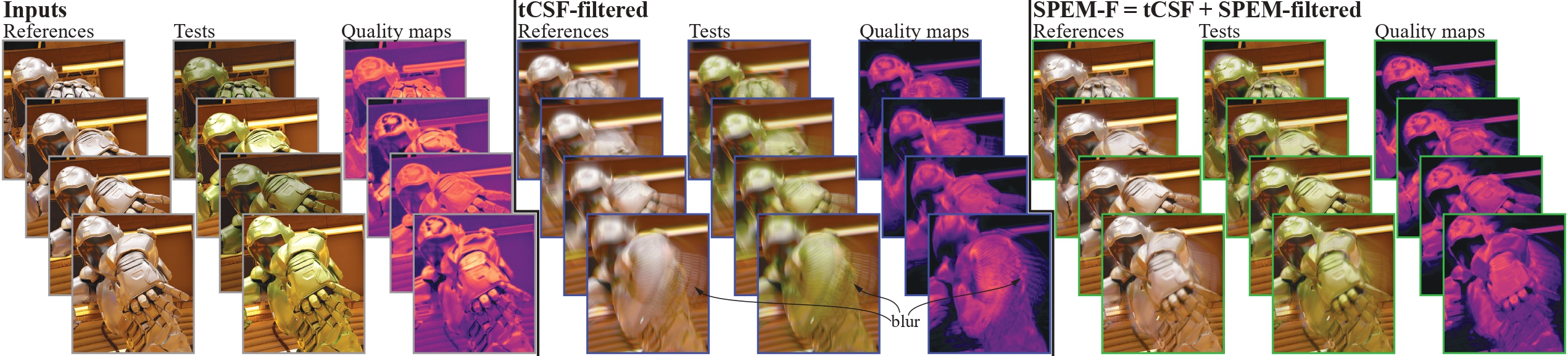

SPEM-F: Accounting for Eye Motion in Image and Video Quality Metrics

Pontus Ebelin, Yaru Liu, Niklas Sanden, Dounia Hammou, Daqi Lin, Tomas Akenine-Möller, Rafal Mantiuk

2026

We present SPEM-F, a novel preprocessing filter that models smooth pursuit eye motion to convert any image or video quality metric into a perceptual video quality metric. The filter physiologically simulates visual system latency and, as validated on a new 240-fps dataset, significantly improves prediction accuracy for temporal rendering artifacts and fast motion.


M3ashy: Multi-Modal Material Synthesis via Hyperdiffusion

Chenliang Zhou, Zheyuan Hu, Alejandro Sztrajman, Yancheng Cai, Yaru Liu, Cengiz Oztireli

Proceedings of the AAAI Conference on Artificial Intelligence, 2025 [Paper]

This framework enables neural material synthesis by using hyperdiffusion to learn a distribution over neural material weights. It supports flexible generation guided by multi-modal inputs such as material types, text descriptions, or reference images.


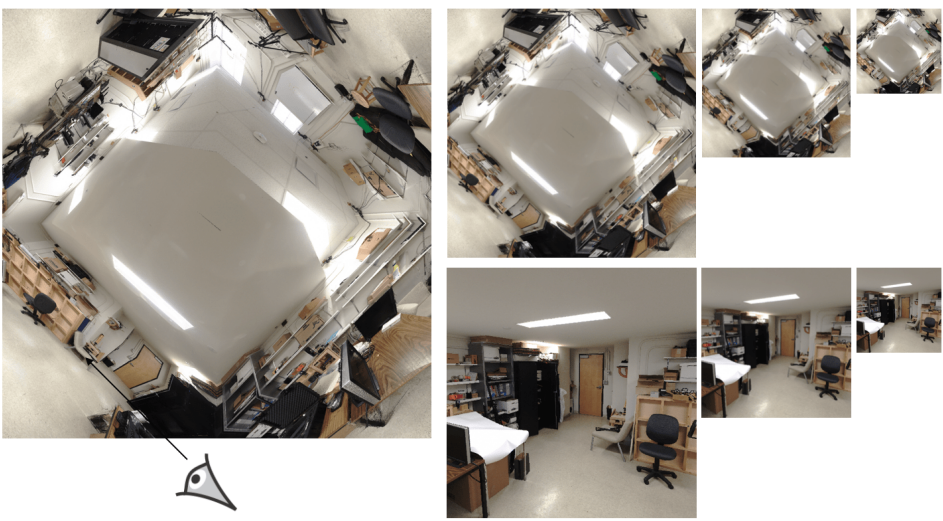

Real-Time Scene Reconstruction using Light Field Probes

Yaru Liu, Derek Nowrouzezahrai, Morgan McGuire

I3D 2024, Poster [Paper]

Our approach leverages sparse real-world images to generate multi-scale implicit representations of scene geometry. By introducing a novel probe data structure, we capture depth accurately and decouple rendering performance from scene complexity.